How to Hack an Iranian Nuclear Plant (A Lazy Hacker's Guide)

Disclaimer: This post is for educational purposes only. Attempting to hack nuclear facilities or critical infrastructure is extremely illegal, extraordinarily dangerous, and will land you in a very uncomfortable prison cell for a very long time. Don't be that person. Seriously.

Part 1: The Hypothetical "How-To" (For Entertainment Purposes)

So you've decided you want to hack a nuclear enrichment facility? Bold choice! Here's your totally-not-serious, absolutely-hypothetical guide to pulling off one of the most sophisticated cyber operations in history. (Again, DON'T ACTUALLY DO THIS.)

Step 1: Assemble Your Ocean's Eleven (Cyber Edition)

First things first – you're going to need a team. And not just any team. We're talking nation-state level talent here:

The Dream Team:

- The Exploit Developer – This person eats zero-day vulnerabilities for breakfast. They need to find security flaws in Windows that Microsoft doesn't even know exist yet. Preferably four of them. You know, just in case.

- The SCADA Specialist – Someone who understands industrial control systems better than the engineers who built them. They should be able to recite Siemens PLC documentation in their sleep and have strong opinions about ladder logic.

- The Social Engineer – The smooth talker who can convince anyone to plug in a random USB stick they found in the parking lot. Bonus points if they can do it without raising suspicion.

- The Intelligence Analyst – Someone who knows the target facility's layout, personnel, supply chain, and probably what the security guard had for lunch on Tuesday. Google Earth skills are mandatory.

- The Malware Architect – A coding genius who can build self-replicating worms that are stealthier than a ninja and more persistent than your mom asking when you're getting married.

- The Delivery Specialist – Because even the best hack is pointless if you can't get your payload into an air-gapped facility. (Exfiltration is getting data out; this job is getting code in.) This person specializes in creative delivery methods.

Budget: Oh, and you'll need a budget. A BIG budget. We're talking millions of dollars, access to government-level resources, and probably a few years of research and development. No pressure!

Step 2: Research Your Target (The Stalker Phase)

You can't just waltz into a nuclear facility and start clicking random buttons. You need intelligence. LOTS of intelligence.

What You Need to Know:

- Facility Layout – Where are the centrifuges? What's the network topology? Where do contractors park? Is there a Starbucks nearby? (Important for parking lot USB drops.)

- Equipment Details – What specific models of PLCs (Programmable Logic Controllers) are they using? Siemens S7-300 and S7-400 series? Perfect. Write that down.

- Operating System – Are they running Windows XP embedded systems? (Spoiler: They probably are, because critical infrastructure loves outdated software.)

- Air Gap Status – Is the network connected to the internet? No? Okay, time to get creative with physical access.

- Supply Chain – Who are their contractors? Who repairs their equipment? Who delivers their replacement parts? These are your potential insertion points.

- Personnel – Who works there? What are their routines? Do they smoke? (Smokers are great targets – they go outside frequently and are less observant.)

Methods:

- Open-source intelligence (OSINT) – Public records, LinkedIn stalking, satellite imagery

- Human intelligence (HUMINT) – Bribing insiders, befriending engineers at conferences

- Signals intelligence (SIGINT) – Intercepting communications (requires fancy government toys)

- Dumpster diving – Never underestimate what people throw away

Step 3: Build Your Super Weapon (The Coding Marathon)

Now comes the fun part – building malware so sophisticated that antivirus companies will be studying it for years.

Your Malware Needs:

Component 1: The Delivery Worm

- Must spread via USB drives (classic!)

- Should exploit zero-day vulnerabilities in Windows

- Needs to propagate across local networks

- Must be stealthy enough to avoid detection by antivirus

Component 2: The Payload

- Specifically targets Siemens STEP 7 software (the programming environment for PLCs)

- Can intercept communications between PLC and HMI (Human-Machine Interface)

- Modifies PLC code to sabotage physical processes

- Hides evidence by replaying "normal" sensor data to operators

Component 3: The Rootkit

- Hides files, processes, and registry keys

- Uses stolen digital certificates to appear legitimate

- Evades behavioral analysis

- Self-destructs after a certain date (clean up after yourself!)

The Exploit Shopping List:

You'll need some zero-days. At least four should do it:

- LNK File Handling Vulnerability (CVE-2010-2568) – Executes code when you simply view a folder containing a specially crafted .LNK file. No clicking required!

- Print Spooler Vulnerability (CVE-2010-2729) – Allows privilege escalation and remote code execution via shared printers.

- Task Scheduler Vulnerability (CVE-2010-3338) – Another privilege escalation vector.

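- Network Share Vulnerability (CVE-2008-4250) – Helps with spreading across networks. (Strictly speaking this one was not a zero-day: Microsoft had already patched it in 2008 as MS08-067. Stuxnet's fourth zero-day was a second privilege escalation bug.)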

Pro Tips:

- Use multiple propagation methods (USB, network shares, print spoolers)

- Make it polymorphic (changes its signature to avoid detection)

- Add legitimate digital signatures (steal them from Realtek and JMicron if you can)

- Make the payload activate only when it detects the exact target hardware

Estimated Development Time: 6-12 months with a team of elite programmers. No big deal.

Step 4: Get Physical Access (The Social Engineering Phase)

Here's the thing about air-gapped networks: they're great for security, but humans are still the weakest link. You need to get your malware onto a computer inside the facility.

Method 1: The Parking Lot Drop

Step into the parking lot of a company that does business with the nuclear facility. Drop several USB sticks labeled "Salary Information Q4 2009" or "Confidential - Executive Photos" near employee vehicles. Human curiosity will do the rest.

Method 2: The Contractor Infiltration

Target a contractor or supplier who has access to the facility. Infect their systems first, so when their maintenance person connects a laptop to the facility's network for legitimate work, your malware hitches a ride.

Method 3: The Infected Equipment

If you have serious resources, infiltrate the supply chain. Get your malware pre-installed on replacement parts or equipment being delivered to the facility.

Method 4: The Inside Job

Recruit or compromise an insider. This is the hardest but most reliable method. Requires significant social engineering or coercion.

The Psychology:

- People trust USB devices that look official

- Curiosity is powerful ("What's on this mysterious USB stick?")

- Contractors assume company-issued equipment is safe

- Most people don't think "nation-state cyber weapon" when they see a USB drive

Step 5: Deploy and Pray (The Moment of Truth)

Your malware is ready. Your delivery mechanism is in place. Now you wait.

What Happens Next:

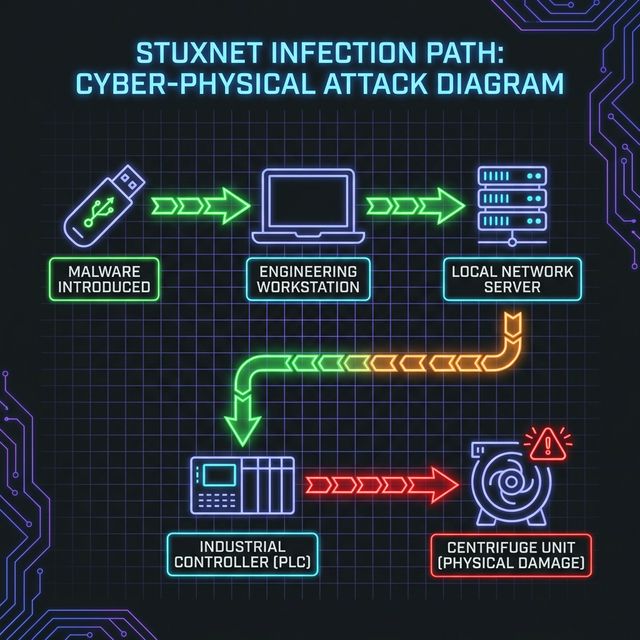

- Initial Infection – Someone plugs in the USB stick or connects an infected laptop.

- Lateral Movement – The worm spreads across the network, looking for its target.

- Target Identification – The malware scans for Siemens STEP 7 software and specific PLC configurations.

- Payload Activation – Once it finds the exact PLCs controlling the centrifuges, it springs into action.

- Sabotage – The malware alters the PLC code to:

  - Spin centrifuges at dangerous, unstable speeds

  - Then slow them down suddenly

  - Repeat this cycle to cause mechanical stress and failure

  - All while showing "normal" readings to operators

- Cover-up – The malware intercepts sensor data and replays previously recorded "normal" values, so operators don't realize anything is wrong until centrifuges start physically falling apart.

What Could Go Wrong:

- Detection before reaching the target

- Antivirus catching it

- Operators noticing unusual behavior

- The malware spreading too far and being discovered in the wild (oops!)

- International incident and geopolitical crisis

Step 6: Watch the Fireworks (From a Safe Distance)

If everything goes according to plan:

- Centrifuges start vibrating abnormally

- Bearings fail

- Rotors get damaged

- Engineers are confused because all the monitoring systems say everything is fine

- Months of enrichment work destroyed

- Iran's nuclear program set back by years

- You've just pulled off the most sophisticated cyber attack in history

Congratulations! You're now either a national hero or an international war criminal, depending on who you ask.

Part 2: The Real Story (Because Truth Is Stranger Than Fiction)

Everything you just read? That actually happened. Well, sort of. Let me tell you about Stuxnet – the cyber weapon that changed everything.

The Discovery: "Wait... What Is This Thing?"

June 2010, Belarus

A small antivirus company called VirusBlokAda in Belarus was investigating why some of their clients' computers kept crashing. They discovered something... weird. Really weird.

The malware they found was unlike anything they'd seen before:

- Massive complexity (over 15,000 lines of code)

- Multiple zero-day exploits (FOUR of them!)

- Stolen digital certificates from legitimate companies

- Targeted very specific industrial control systems

- Seemed designed to sabotage physical equipment

Sergey Ulasen, the researcher who first analyzed it, reportedly said something along the lines of: "This is not normal malware. This is something else entirely."

The Investigation: Reverse Engineering a Masterpiece

Security researchers from Symantec, Kaspersky Lab, and other firms spent months dissecting Stuxnet. What they found was absolutely bonkers:

The Technical Marvel:

- Four Zero-Day Exploits – Unpatched vulnerabilities in Windows that Microsoft didn't know about. Zero-days are rare and expensive. Having four in one piece of malware was unprecedented.

- Stolen Digital Certificates – The malware was signed with legitimate certificates stolen from Realtek and JMicron, both Taiwanese tech companies. This made Windows trust the malware as if it were legitimate software.

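- Two Rootkits – One on Windows, hiding the malware's files from antivirus and system administrators, and one on the PLC itself, hiding the modified control code from engineers. The PLC rootkit was the first publicly documented one of its kind.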

- PLC Payload – Code specifically designed to reprogram Siemens S7 PLCs. This was the smoking gun that proved Stuxnet wasn't just stealing data – it was sabotaging physical equipment.

The Propagation Methods:

Stuxnet spread like wildfire, but with surgical precision:

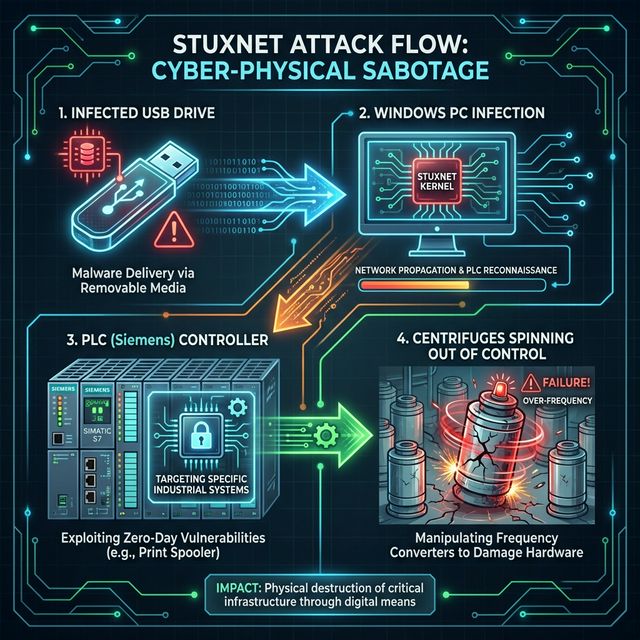

- USB Drives – The primary infection vector. LNK file vulnerability meant just opening a folder would execute the malware.

- Network Shares – Spread across local networks automatically.

- Print Spooler – Used printer sharing to propagate.

- WinCC Database – Targeted Siemens' industrial software specifically.

The Genius Part: Stuxnet was a worm, but it didn't act like typical malware. Most malware tries to infect everything. Stuxnet had a mission. It spread widely to find its way into air-gapped networks, but only activated its destructive payload when it found EXACTLY the right conditions.

The Target: Natanz Fuel Enrichment Plant

As researchers dug deeper, the target became clear: Iran's Natanz nuclear facility.

Why Natanz?

Natanz is where Iran enriches uranium using thousands of centrifuges. These centrifuges spin at incredibly high speeds (supersonic!) to separate uranium isotopes. They're delicate, expensive, and take years to install.

What Stuxnet Did:

The malware had an incredibly specific target:

- Scanning Phase – Stuxnet spread through Iranian networks looking for computers running Siemens STEP 7 software (used to program PLCs).

- Fingerprinting – It checked PLC configurations to find ones controlling centrifuges with Vacon or Fararo Paya frequency converter drives (the exact models Iran used).

- Payload Activation – When it found the right PLCs, Stuxnet:

  - Recorded normal sensor data

  - Modified PLC code to alter centrifuge speeds

  - Replayed the recorded "normal" data to operators

The Sabotage:

Stuxnet made centrifuges do something they should never do:

- Phase 1: Increase rotor speed from normal (1,064 Hz) to 1,410 Hz for 15 minutes

- Phase 2: Drop speed to 2 Hz for 50 minutes

- Repeat – This cycle caused enormous mechanical stress

Imagine you're driving a car and someone forces you to floor it to 150 mph, then slam on the brakes, then floor it again, then slam the brakes – for months. Your car is going to break.

That's exactly what happened to Iran's centrifuges. Except operators had no idea because their screens showed everything was fine.

The Results:

- Approximately 1,000 centrifuges destroyed (about 20% of Natanz's total)

- Iran's nuclear program delayed by months or years

- Engineers couldn't figure out what was wrong (mysterious failures!)

- When they replaced broken centrifuges, Stuxnet sabotaged those too

The Attribution: Who Did This?

Stuxnet had no "Made by [Country Name]" stamp, but the evidence pointed in a pretty obvious direction.

The Clues:

- Complexity – Only nation-states have resources for this level of sophistication.

- Zero-Days – Four zero-days represent millions of dollars in development or black market purchases.

- Target Specificity – Knew exact details of Iran's centrifuge configuration.

- Code References – Researchers found strings and dates in the code that some read as hidden references:

  - String "Myrtus" (possibly a biblical allusion to Queen Esther, who saved the Jews from Persian destruction)

  - Malware had a "kill date" of June 24, 2012 (some speculated a connection to historical events)

- Motivation – Who wants to stop Iran's nuclear program? Short list: Israel and the United States.

The Confirmation:

While neither country officially admitted responsibility, various sources confirmed what everyone suspected:

- New York Times (2012) – Reported that "Olympic Games" (the codename) was a joint US-Israel operation.

- Documentary "Zero Days" (2016) – Anonymous intelligence sources confirmed involvement.

- Barack Obama – Never confirmed it publicly, but the same reporting says he accelerated the program, which began under George W. Bush, during his first term.

The Players:

- NSA (National Security Agency) – Provided exploits and technical expertise

- Unit 8200 – Israeli military intelligence unit, SCADA expertise

- CIA – Intelligence gathering and coordination

- Programmers – Teams from both countries collaborated

The Spread: Oops, It Got Out

Here's where things get messy. Stuxnet was supposed to stay contained within Iranian networks. It didn't.

The Problem:

Stuxnet spread too well. It infected over 100,000 computers worldwide:

- Iran (obviously) – ~60% of infections

- Indonesia – 18%

- India – 9%

- United States, Pakistan, Germany, China, and dozens of other countries

Why It Spread:

- Aggressive Propagation – Needed to spread widely to find paths into air-gapped networks

- USB Drives – People travel, USBs get shared

- No Remote Kill Switch – The only brake was the hard-coded kill date (June 24, 2012), so once released it kept spreading until then

- Updates – Later variants (2009-2010) were even more aggressive

The Discovery:

The worldwide spread is actually what led to Stuxnet's discovery. If it had stayed contained in Iran, we might never have known about it. Researchers at VirusBlokAda in Belarus, investigating crashes on clients' machines, stumbled onto it.

The Irony:

The very feature that made Stuxnet successful (aggressive spreading to penetrate air-gaps) also ensured it would be discovered. A too-successful worm doomed itself to exposure.

The Technical Deep Dive: How It Actually Worked

Let's get into the nitty-gritty details that made Stuxnet a technical marvel.

Stage 1: Initial Infection (The USB Trick)

The attack chain started with infected USB drives, likely delivered via:

- Contractors working at Natanz

- Supply chain infiltration

- Targeted contractors in Europe who supplied equipment

CVE-2010-2568 (LNK File Vulnerability):

This was the crown jewel exploit. Here's why it was so devastating:

Normal behavior: Click an .LNK shortcut → Program launches

Stuxnet behavior: View a folder containing malicious .LNK → Code executes automatically

No user interaction needed beyond opening a folder. The .LNK file pointed to a DLL that had malicious code. Windows helpfully executed it.

Stage 2: Privilege Escalation

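Once on a system, Stuxnet needed admin rights. It used two local privilege escalation zero-days:

- CVE-2010-2743 – Win32k keyboard layout vulnerability (used on older systems such as Windows XP)

- CVE-2010-3338 – Task Scheduler vulnerability (used on Windows Vista and later)

These allowed Stuxnet to gain SYSTEM-level privileges, giving it complete control. The Print Spooler flaw (CVE-2010-2729) was a remote code execution bug, so it belongs with the spreading mechanisms in Stage 4.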

Stage 3: Rootkit Installation

Stuxnet installed rootkits to hide:

- Files (drivers and configuration files)

- Processes (running malware)

- Registry keys (persistence mechanisms)

It used stolen digital certificates from Realtek (later JMicron when Realtek's was revoked) to appear legitimate. Windows said: "This is signed software from a trusted vendor, everything's fine!"

Stage 4: Lateral Movement

Stuxnet spread across networks using:

- Network Shares – Copied itself to shared folders

- CVE-2008-4250 – Windows Server Service vulnerability for remote exploitation

- Print Spooler Sharing – Exploited printer sharing to reach other computers

- WinCC Database – Targeted Siemens' SCADA software database with hardcoded passwords (Seriously, Siemens? Default passwords?)

Stage 5: Target Identification

Stuxnet wasn't interested in most computers. It looked for:

- Windows systems running Siemens STEP 7 software (PLC programming environment)

- Specific PLC models: Siemens S7-300 and S7-400

- Exact configuration: Frequency converters from Iran's Fararo Paya (or Finland's Vacon)

- Centrifuge cascade: 164 centrifuges arranged in a specific configuration

This was incredibly specific targeting. It's like building a missile that only explodes if it's in a specific room of a specific building.

Stage 6: Payload Deployment (The Sabotage)

When Stuxnet found its target, it did something brilliant and terrifying:

Man-in-the-Middle Attack on Industrial Control:

Normal Flow:

Operator HMI → STEP 7 Software → PLC → Centrifuges

Operator HMI ← Sensor Feedback ← PLC

Stuxnet Flow:

Operator HMI → STEP 7 Software (infected) → PLC (reprogrammed) → Centrifuges (sabotaged)

Operator HMI ← Recorded "Normal" Data (fake) ← PLC (reprogrammed)

The Attacks:

Researchers identified two main attack sequences:

Attack Sequence A (Early 2009):

- Target: January 2009 operations

- Method: Manipulate valve control

- Period: 13-day attack windows

- Goal: Disrupt cascade pressure

Attack Sequence B (Later 2009-2010):

- Target: Centrifuge rotor speed

- Method: Frequency converter manipulation

- Changes rotor from 1,064 Hz to 1,410 Hz (too fast)

- Then drops to 2 Hz (effectively stopped)

- Duration: 27-day cycles with 15-minute high-speed bursts
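To put those numbers in perspective, here is a quick back-of-the-envelope sketch in Python. It uses only the figures quoted above; the function names are invented for illustration, and treating the 27 days as one full cycle is a simplifying assumption.

```python
NORMAL_HZ = 1064       # nominal drive frequency quoted above
OVERSPEED_HZ = 1410    # high-speed burst frequency quoted above
BURST_MINUTES = 15     # length of the high-speed burst
CYCLE_DAYS = 27        # waiting period between bursts (assumed to be one cycle)


def overspeed_percent(normal_hz: float, burst_hz: float) -> float:
    """Percentage by which the burst frequency exceeds the nominal one."""
    return (burst_hz / normal_hz - 1.0) * 100.0


def burst_duty_percent(burst_minutes: float, cycle_days: float) -> float:
    """Share of one cycle spent in the burst, as a percentage."""
    cycle_minutes = cycle_days * 24 * 60
    return burst_minutes / cycle_minutes * 100.0


if __name__ == "__main__":
    print(f"Overspeed: {overspeed_percent(NORMAL_HZ, OVERSPEED_HZ):.1f}% above nominal")
    print(f"Burst share of cycle: {burst_duty_percent(BURST_MINUTES, CYCLE_DAYS):.3f}%")
```

The burst pushes the drive roughly a third above its nominal frequency, yet it occupies only a few hundredths of a percent of the cycle. That goes some way toward explaining why operators rarely caught it in the act.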

The Deception:

While sabotaging, Stuxnet:

- Recorded 21 seconds of normal sensor data

- Played this loop back to monitoring systems

- Made operators think everything was fine

- Centrifuges were destroying themselves while screens showed green checkmarks

Imagine a doctor showing you perfect heartbeat readings while you're actually having a heart attack. That's what Stuxnet did.
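From the defender's chair, a replayed loop has a tell: real sensors are noisy, so a reading that repeats exactly, sample for sample, is suspicious in itself. Below is a minimal Python sketch of that idea. The function name, the thresholds, and the sample data are all hypothetical, and a real historian would need tolerance for quantised values that repeat by chance.

```python
from typing import Optional, Sequence


def find_exact_loop(samples: Sequence[float],
                    min_period: int = 2,
                    min_repeats: int = 3) -> Optional[int]:
    """Return the shortest period p for which the most recent
    min_repeats * p samples repeat exactly with period p, else None."""
    n = len(samples)
    for period in range(min_period, n // min_repeats + 1):
        window = samples[n - min_repeats * period:]
        if len(set(window)) == 1:
            continue  # a flat-lined signal is a different kind of fault
        if all(window[i] == window[i + period]
               for i in range(len(window) - period)):
            return period
    return None


if __name__ == "__main__":
    looped = [1064.1, 1063.8, 1064.3, 1063.9] * 5   # hypothetical replayed data
    drifting = [1064.0 + 0.01 * i for i in range(20)]
    print(find_exact_loop(looped))     # a period is reported
    print(find_exact_loop(drifting))   # nothing repeats
```

This only catches naive replays. It is one cheap check among several, not a detector to rely on alone.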

The Impact: Changing Warfare Forever

Stuxnet wasn't just a cool hack. It fundamentally changed how we think about security and warfare.

Immediate Effects:

- Physical Damage – First publicly known malware to cause real-world physical destruction

- Nuclear Delay – Set back Iran's enrichment program significantly

- Psychological – Iranian engineers couldn't trust their own systems

Long-Term Consequences:

1. Cyber Warfare Precedent

Stuxnet proved nation-states could use cyber weapons for physical sabotage. It opened Pandora's Box:

- If the US can sabotage centrifuges, Russia can sabotage power grids

- If Israel can halt nuclear programs, China can destroy factories

- If it works on PLCs, it works on dams, refineries, hospitals, water treatment...

2. Proliferation of Techniques

Stuxnet's code was available for analysis. Relatives and similarly ambitious malware started appearing:

- Duqu (2011) – Intelligence gathering; shares code with Stuxnet

- Flame (2012) – Massive cyber-espionage tool

- Shamoon (2012) – Destroyed 30,000 Saudi Aramco computers

- Havex (2013) – Targeted industrial control systems

- BlackEnergy (2015) – Attacked Ukraine's power grid

3. Industrial Security Wake-Up Call

Critical infrastructure operators realized they were woefully unprepared:

- Air gaps alone don't work (Stuxnet proved it)

- Default passwords are suicide (Siemens learned this painfully)

- Outdated software is a disaster waiting to happen

- Supply chain security is critical

4. Geopolitical Tensions

- Demonstrated cyber warfare capabilities

- Established cyber attacks as legitimate military option

- Created international precedent for offensive cyber operations

- Raised questions about cyber arms control (there isn't any)

The Lessons Learned: What Stuxnet Teaches Us

Lesson 1: Air Gaps Are a False Sense of Security

The Myth: "If it's not connected to the internet, it's safe."

The Reality: Stuxnet laughed at air gaps. How?

- Human Vectors – USB drives, laptops, contractors

- Supply Chain – Infected equipment before delivery

- Social Engineering – Curiosity killed the air gap

Modern Implications:

Air-gapped networks exist across critical infrastructure:

- Nuclear power plants

- Military systems

- Industrial control systems

- Financial institutions (high-security areas)

If your security strategy relies solely on air gaps, you're in trouble.

Better Approach:

- Defense in depth (multiple layers)

- Physical security (control who accesses USB ports)

- Device control (allowlist approved USB devices)

- Monitoring and anomaly detection

- Assume breach mentality
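As a rough illustration of the device-control item, here is a minimal Python sketch of a USB allowlist check. Everything in it is an assumption made for the example: the `UsbDevice` fields, the placeholder IDs and serials, and the idea that connection events arrive as a simple list. Real enforcement lives in the operating system or endpoint tooling, not in a script.

```python
from dataclasses import dataclass
from typing import Iterable, List, Set


@dataclass(frozen=True)
class UsbDevice:
    vendor_id: int
    product_id: int
    serial: str


# Hypothetical allowlist of site-issued drives (placeholder IDs and serials).
ALLOWLIST: Set[UsbDevice] = {
    UsbDevice(0x1234, 0x0001, "SITE-0001"),
    UsbDevice(0x1234, 0x0001, "SITE-0002"),
}


def unapproved_devices(events: Iterable[UsbDevice],
                       allowlist: Set[UsbDevice]) -> List[UsbDevice]:
    """Return every connected device that is not on the allowlist."""
    return [device for device in events if device not in allowlist]


if __name__ == "__main__":
    seen = [
        UsbDevice(0x1234, 0x0001, "SITE-0001"),
        UsbDevice(0x1234, 0x0002, "PARKING-LOT-FIND"),
    ]
    for device in unapproved_devices(seen, ALLOWLIST):
        print(f"ALERT: unapproved USB device {device}")
```

Matching on serial number as well as vendor and product ID is deliberate. A drive of an approved model that was not issued by the site should still be flagged.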

Lesson 2: Humans Are the Weakest Link

Every. Single. Time.

Stuxnet's Success Factors:

- Someone plugged in a USB drive (curiosity)

- Contractors trusted equipment (assumption of safety)

- Engineers used default passwords (laziness)

- Operators didn't question anomalies (training failure)

Statistics:

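- Industry reports regularly attribute the large majority of successful attacks to human error (figures around 90% are often quoted, though definitions vary)

- Phishing success rates: 10-30% is a commonly cited range

- People WILL plug in USB drives they find (drop-test studies have shown it)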

The Fix:

Security awareness training that actually works:

- Regular phishing simulations

- Make it engaging (not boring compliance videos)

- Reward good behavior

- Create a security-conscious culture

- Make reporting easy and blame-free

Lesson 3: Complexity Is the Enemy of Security

Stuxnet demonstrates this perfectly:

Problems:

- Siemens WinCC shipped with a hardcoded database password

- Windows had four zero-day vulnerabilities

- Digital certificates were stolen and not monitored

- Industrial systems ran outdated software

- Network segmentation was insufficient

The more complex your system, the more attack surface you expose.

Principle of Least Complexity:

- Minimize code (less code = fewer bugs)

- Reduce dependencies (fewer third-party vulnerabilities)

- Simplify architecture (easier to secure and monitor)

- Regular security audits

Lesson 4: Detection Is as Important as Prevention

Even if Stuxnet got in, better monitoring could have caught it earlier:

Red Flags That Were Missed:

- Unusual USB activity

- Rootkit installation

- Network propagation patterns

- PLC code modifications

- Physical centrifuge failures with "normal" readings
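The last red flag suggests a simple cross-check: compare what the control system reports against an independent measurement that it cannot touch. The Python sketch below assumes two already time-aligned lists of readings and a made-up tolerance. The names and numbers are illustrative and do not come from any real plant.

```python
from typing import List, Sequence


def flag_mismatches(reported_hz: Sequence[float],
                    independent_hz: Sequence[float],
                    tolerance_hz: float = 5.0) -> List[int]:
    """Return the sample indexes where the two channels disagree by more
    than tolerance_hz. Both sequences must be time-aligned."""
    if len(reported_hz) != len(independent_hz):
        raise ValueError("channels must have the same number of samples")
    return [
        index
        for index, (reported, measured) in enumerate(zip(reported_hz, independent_hz))
        if abs(reported - measured) > tolerance_hz
    ]


if __name__ == "__main__":
    reported = [1064.0, 1064.0, 1064.0, 1064.0]      # what the screen says
    independent = [1064.2, 1063.9, 1410.0, 1409.5]   # hypothetical second sensor
    print(flag_mismatches(reported, independent))
```

The check is only as good as the independence of the second channel. If both readings pass through the same compromised controller, they will agree with each other and both be wrong.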

Modern Security:

Shift from prevention-only to assume breach:

- Endpoint Detection and Response (EDR)

- Security Information and Event Management (SIEM)

- Anomaly detection with AI/ML

- Behavioral analysis

- Threat hunting teams

Specifically for Industrial Systems:

- Monitor PLC code changes

- Log all USB connections

- Detect unusual network traffic patterns

- Physical sensor redundancy (can't fake all sensors)

- Out-of-band monitoring (separate systems watching the watchers)
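To make "monitor PLC code changes" concrete, here is a minimal Python sketch that fingerprints program blocks and compares them against a known-good baseline. It assumes the blocks have already been exported as raw bytes over a path the attacker does not control, which is the hard part given that Stuxnet hid its changes from the engineering software. The block names and contents are placeholders.

```python
import hashlib
from typing import Dict, List


def fingerprint(blocks: Dict[str, bytes]) -> Dict[str, str]:
    """Map each block name to the SHA-256 hex digest of its contents."""
    return {name: hashlib.sha256(data).hexdigest() for name, data in blocks.items()}


def diff_against_baseline(baseline: Dict[str, str],
                          current: Dict[str, str]) -> Dict[str, List[str]]:
    """List blocks that were added, removed, or changed since the baseline."""
    shared = set(baseline) & set(current)
    return {
        "added": sorted(set(current) - set(baseline)),
        "removed": sorted(set(baseline) - set(current)),
        "changed": sorted(name for name in shared if baseline[name] != current[name]),
    }


if __name__ == "__main__":
    # Placeholder block names and contents, for illustration only.
    baseline = fingerprint({"OB1": b"main cycle v1", "FB10": b"drive control v1"})
    current = fingerprint({"OB1": b"main cycle v1",
                           "FB10": b"drive control v1 (modified)",
                           "FC99": b"unexpected block"})
    print(diff_against_baseline(baseline, current))
```

Any non-empty result outside a scheduled maintenance window is worth a phone call to the engineer who supposedly made the change.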

Lesson 5: Supply Chain Security Is Critical

Stuxnet likely infiltrated via contractors and suppliers.

Attack Vectors:

- Infected laptops from maintenance contractors

- Compromised USB drives from equipment suppliers

- Pre-infected replacement parts

- Trusted third-party software updates

Modern Examples:

- SolarWinds (2020) – Compromised software updates infected thousands

- NotPetya (2017) – Spread via Ukrainian tax software update

- CCleaner (2017) – Popular utility had backdoored version distributed

Protection Strategies:

- Vet all suppliers and contractors

- Verify software signatures and hashes

- Isolate contractor access

- Monitor third-party activity

- Have incident response plans for supply chain compromise
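For the "verify software signatures and hashes" item, here is a minimal Python sketch that checks a downloaded file against a SHA-256 digest published by the vendor. The function names are hypothetical, and the sketch assumes the expected digest reached you over a separate, trusted channel. Keep in mind that Stuxnet's drivers carried valid stolen signatures, so a passing check is necessary but not sufficient.

```python
import hashlib
import hmac
from pathlib import Path


def sha256_of_file(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash the file in chunks so large images don't need to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_published_hash(path: Path, expected_hex: str) -> bool:
    """Compare the file's digest with the published one in constant time."""
    return hmac.compare_digest(sha256_of_file(path), expected_hex.strip().lower())
```

A typical use would be calling `matches_published_hash(Path("update.bin"), digest_from_vendor)` before anything from a contractor or supplier gets near the control network, where both arguments are placeholders for your own values.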

Lesson 6: Security Through Obscurity Doesn't Work

Iran probably thought:

- "Who would spend millions targeting our specific PLCs?"

- "Our systems are proprietary and undocumented"

- "Attackers can't possibly know our exact configuration"

They were wrong on all counts.

The Reality:

If you're a valuable target, motivated attackers will:

- Spend millions on reconnaissance

- Reverse engineer your "proprietary" systems

- Recruit insiders

- Buy intelligence from sources you didn't know existed

Better Security:

Assume attackers know everything about your system. Design security accordingly:

- Defense in depth

- Zero-trust architecture

- Privilege minimization

- Strong authentication

- Continuous monitoring

Lesson 7: Physical and Cyber Security Must Converge

Stuxnet blurred the lines between cyber and physical security.

Traditional Separation:

- IT Security: Firewalls, antivirus, network monitoring

- Physical Security: Guards, locks, cameras

Stuxnet's Lesson:

Cyber attacks can cause physical destruction:

- Centrifuges destroyed

- Equipment damaged

- Facility partially disabled

Other Examples:

- Ukraine Power Grid (2015) – Cyber attack caused blackouts

- Triton/Trisis (2017) – Targeted Saudi petrochemical safety systems

- Oldsmar Water Treatment (2021) – Hacker tried to poison water supply

Integrated Security:

Modern critical infrastructure needs unified security:

- Cybersecurity teams working with engineers

- Physical security integrated with network monitoring

- Incident response plans covering both domains

- Training for both IT and operations personnel

The Legacy: Living in a Post-Stuxnet World

We can divide cybersecurity history into two eras: Before Stuxnet (BS) and After Stuxnet (AS).

Before Stuxnet:

- Malware mostly for profit (ransomware, banking trojans)

- Nation-states focused on espionage

- Air gaps considered reliable

- Industrial systems largely ignored by attackers

- Physical sabotage required physical presence

After Stuxnet:

- Cyber weapons enable physical destruction

- Nation-states have offensive cyber warfare capabilities

- Air gaps are bypassed regularly

- Critical infrastructure is a primary target

- Attribution is difficult but consequences are real

- Cyber deterrence becomes geopolitical issue

The Questions It Raised:

- Legal: Is a cyber attack an act of war? When is retaliation justified?

- Ethical: Is sabotaging a nuclear program better than military strikes? What about collateral damage?

- Technical: How do we secure systems that were never designed for internet threats?

- Strategic: Should there be international cyber weapons treaties? (Spoiler: Good luck enforcing those)

The Arms Race:

Stuxnet kicked off a cyber arms race:

- Every major nation now has offensive cyber capabilities

- Exploit markets thrive (high-end zero-days can sell for millions)

- Cyber units are part of military structure

- Defense budgets include cyber warfare

- Attribution problems make deterrence difficult

Conclusion: The Hackers Nobody Saw Coming

So there you have it – the wildest cyber attack story ever told, from satirical "how-to" to sobering reality.

Stuxnet proved that:

- Code can destroy physical infrastructure

- Air gaps don't protect against determined attackers

- Nation-states play a very different game than criminal hackers

- Industrial systems need security, not just functionality

- Human error opens doors that technology can't close

The Uncomfortable Truth:

Stuxnet was a warning. A proof of concept. If skilled attackers can destroy centrifuges in Iran, they can:

- Shut down power grids

- Poison water supplies

- Disable hospitals

- Crash financial systems

- Destroy manufacturing plants

And unlike Stuxnet, which had a specific political/military objective, imagine what happens when:

- Criminal groups get similar capabilities (ransomware with physical consequences)

- Terrorist organizations acquire these tools

- Cyber weapons proliferate to less responsible actors

- Automated attacks use AI to choose targets

The Hope:

Awareness is step one. Stuxnet forced:

- Critical infrastructure operators to take security seriously

- Governments to invest in defensive capabilities

- Researchers to study industrial system security

- Standards bodies to create better security guidelines

- Software vendors to (slowly) patch vulnerabilities

Final Thoughts:

The next "Stuxnet" is probably already out there, undiscovered, targeting systems we haven't thought to protect. Or maybe it's being developed right now by someone who read this article and thought "I could do better."

Either way, security isn't just about protecting data anymore. It's about protecting physical safety, national infrastructure, and potentially lives.

So next time you see a random USB stick in a parking lot, maybe – just maybe – don't plug it in.

Stay safe out there, hackers. And remember: with great power comes great responsibility, and potentially an international incident.

Further Reading & Resources

Documentaries and Podcasts:

- Zero Days (2016) – Alex Gibney's excellent documentary on Stuxnet

- Darknet Diaries – Episode 29: "Stuxnet" (podcast)

Books:

- Countdown to Zero Day by Kim Zetter – The definitive Stuxnet book

- Dark Territory by Fred Kaplan – Cyber warfare history

Technical Analysis:

- Symantec Stuxnet Dossier – Detailed technical breakdown

- Kaspersky Lab Report – Comprehensive vulnerability and payload analysis

- Langner Communications – Industrial control systems perspective

Academic Papers:

- "To Kill a Centrifuge" by Ralph Langner

- IEEE papers on SCADA security post-Stuxnet

Mitigation Frameworks:

- NIST Cybersecurity Framework for Critical Infrastructure

- IEC 62443 – Industrial security standards

- NERC CIP – Critical Infrastructure Protection standards

Remember: This article is educational. Learn from Stuxnet to DEFEND, not to ATTACK. The world has enough problems without you adding to them. Be the security professional who prevents the next Stuxnet, not the criminal who creates it.

Now go forth and patch your systems. And for the love of all that is holy, CHANGE YOUR DEFAULT PASSWORDS.